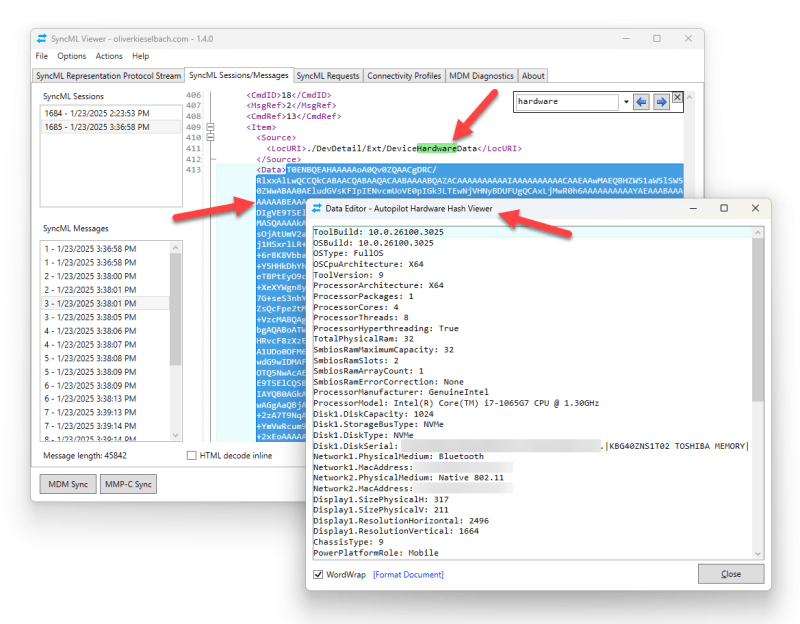

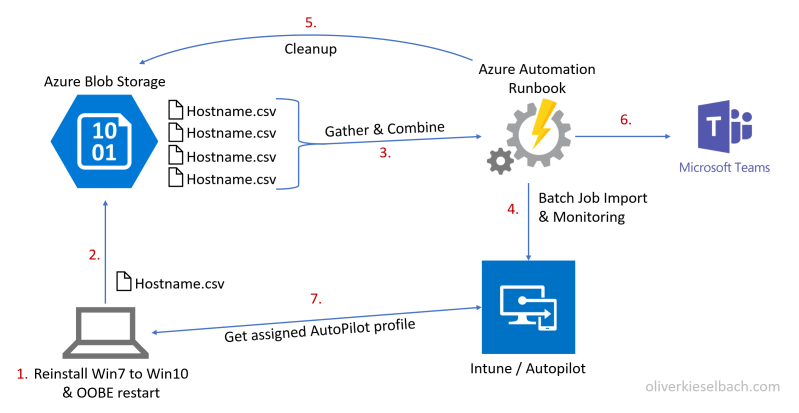

It’s been a while since I updated the Autopilot Manager solution, but here we go with an update to support Windows Corporate Identifiers. First, a quick recap of what Autopilot Manager is. The idea is to provide a more user-friendly, on-the-fly upload of the Autopilot hardware hash to the Intune tenant. Or, with the new version, publishing of…
