Intune-managed devices should be configured to use Delivery Optimization (DO) to reduce overall internet bandwidth usage. DO is a distributed cache solution that uses peer-to-peer transfers for content downloads, and it is very well designed for the cloud era. The latest addition to that concept is Microsoft Connected Cache (MCC), formerly known as DOINC (Delivery Optimization In-Network Cache).

Let’s start with a short introduction to Delivery Optimization. As already mentioned, it is designed for the cloud era, which means it does not rely on broadcast-based peer discovery like BranchCache does. Instead, it uses the cloud (Microsoft Delivery Optimization Services) for peer discovery. The cloud tracks peers based on a defined grouping strategy, so clients requesting content receive a list of peers belonging to their own group.
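To make the grouping idea concrete, here is a minimal Python sketch of group-based peer discovery. It is a toy model only, not how the Microsoft Delivery Optimization Services are implemented; the class name, method names, group names, and IP addresses are all made up for illustration.

```python
from collections import defaultdict


class PeerTracker:
    """Toy stand-in for a cloud-side peer discovery service (hypothetical)."""

    def __init__(self):
        # group_id -> set of peer addresses that announced themselves
        self._groups = defaultdict(set)

    def announce(self, group_id, peer):
        """A client registers itself under the group it belongs to."""
        self._groups[group_id].add(peer)

    def peers_for(self, group_id, requester):
        """Return the peers of the requester's own group, excluding itself."""
        return sorted(self._groups.get(group_id, set()) - {requester})


tracker = PeerTracker()
tracker.announce("group-a", "10.0.1.10")
tracker.announce("group-a", "10.0.1.11")
tracker.announce("group-b", "10.0.2.20")

peers = tracker.peers_for("group-a", "10.0.1.10")
print(peers)
```

The point of the sketch is that a client never sees peers outside its group, which is why the choice of grouping strategy matters so much later in this post.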

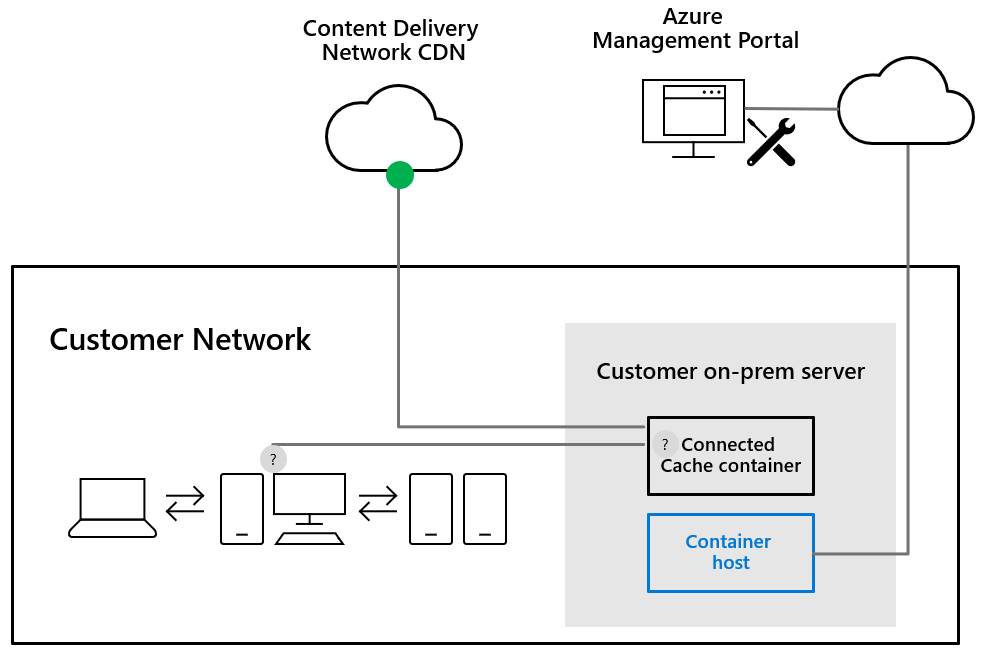

- Microsoft Intune configures Delivery Optimization (DO) settings on the devices, including the Microsoft Connected Cache (MCC) server name (DOCacheHost)

- Device A checks for Windows Updates and gets the address for the content delivery network (CDN)

- Device A requests content from the MCC

- If the cache does not have the content, the MCC gets the content from the CDN

- If the MCC is unavailable or fails to respond, the device downloads the content from the CDN

- Devices A, B, and C still use DO to get missing pieces of content from their peers

Okay clear, the MCC acts as a transparent proxy to cache content.
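The request flow from the list above can be sketched in a few lines of Python. This is a simplified model under my own assumptions: the CDN is a plain dictionary, the `ConnectedCache` class and `download` function are hypothetical names, and peer-to-peer transfers are left out entirely.

```python
class ConnectedCache:
    """Toy model of a cache server sitting in front of a CDN (hypothetical)."""

    def __init__(self, cdn, available=True):
        self.cdn = cdn              # dict acting as the content delivery network
        self.available = available  # False simulates an unreachable cache server
        self.store = {}             # content already cached locally

    def get(self, name):
        if not self.available:
            raise ConnectionError("cache server not reachable")
        if name not in self.store:
            # Cache miss: fetch once from the CDN and keep a copy.
            self.store[name] = self.cdn[name]
        return self.store[name]


def download(name, cache, cdn):
    """Return (content, source); fall back to the CDN if the cache fails."""
    try:
        return cache.get(name), "MCC"
    except ConnectionError:
        return cdn[name], "CDN"


cdn = {"update.cab": b"payload"}
mcc = ConnectedCache(cdn)

first = download("update.cab", mcc, cdn)     # miss, the cache fills itself from the CDN
second = download("update.cab", mcc, cdn)    # hit, served from the cache
mcc.available = False
fallback = download("update.cab", mcc, cdn)  # cache down, device goes to the CDN
print(first[1], second[1], fallback[1])
```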

Which content is supported?

- Windows Updates

- Feature Updates

- Quality Updates

- Driver Updates

- Office 365 ProPlus (Setup and Updates)

- Microsoft Store Apps

- Intune Win32 Apps

- Microsoft Edge (Setup and Updates)

Looks like a complete list, right? For an Intune-managed device, this should indeed cover all the content you have to deal with regularly. If we get the DO implementation right, we get well-working peer-to-peer content delivery supported by an additional cache server.

Where do I get the MCC now?

At the time of writing, the MCC can only be installed on a Configuration Manager Distribution Point.

I won’t go into detail about the MCC installation for Configuration Manager here. My fellow MVP Peter van der Woude wrote a good blog post about it, Microsoft Connected Cache in ConfigMgr with Win32 apps of Intune, and you can just follow his instructions to get the MCC up and running.

At Ignite 2019 Microsoft announced Microsoft Connected Cache managed in Azure, a standalone version of the MCC. It can be installed without ConfigMgr and is based on a lightweight Linux container. This is a major announcement in my opinion, as it can probably be deployed easily and may finally run on a lot of devices. At the time of writing it was only available as a private preview, not as a public preview that anyone could test. The biggest advantage of a Linux container is that we don’t have to pay for additional licenses to spin up an MCC device. If we want to deploy an MCC later, it should be fairly easy to find a device that can run Ubuntu Server 16.04. Of course, it is also supported on Windows Server 2019. These were the two supported operating systems at the time of the Ignite 2019 announcement. I assume more Linux distributions will follow as soon as it is released publicly. If you would like to learn more about the MCC, I really suggest watching the great Ignite 2019 session “Stay current while minimizing network traffic: The power of Delivery Optimization” by Narkis Engler and Andy Rivas (thumbs up!).

Looking at a possible enterprise setup with DO and MCC for cloud-only Intune managed devices

I have done various Delivery Optimization implementations in cloud-only environments. I’m going to use my typical DO setup, which relies on a DHCP option to distribute the DOGroupID, and add an MCC to the design. As I’m not able to use the standalone Linux container version, I will use an MCC on a ConfigMgr Distribution Point (DP). To be clear, I will not use a co-managed device; the device will still be a cloud-only device managed by Intune standalone. I will just point the DO setting DOCacheHost to the ConfigMgr DP that has the MCC enabled. This way the MCC will behave the same as a standalone version would later. No ConfigMgr client will be used to update the DOCacheHost setting based on boundary groups.

Why do I want to test this out?

What I’m especially curious about is using the DHCP option for dynamic assignment of the DOCacheHost value, similar to how ConfigMgr assigns DOCacheHost with the help of boundary groups (see ConfigMgr docs here).

In the mentioned Ignite session it was stated that DOCacheHost will be configurable by DHCP option ID, as is already the case for DOGroupID. See the copied slide from the Ignite session:

Finally, the Windows 10 version 20H1 release is scheduled for March/April 2020, and most of the features should be in a final state in the latest Insider build right now. This lets us build a fully functional lab environment with a Windows 10 Insider VM, an MCC on ConfigMgr used in the same way as a standalone installation, and the DOCacheHost value distributed via DHCP option ID 235 instead of the static list available in the current Intune DO configuration profile:

Prerequisites

As already mentioned we need some components for this:

- ConfigMgr Distribution Point with MCC installed

- Windows 10 Insider VM (I used build 19577)

- Microsoft DHCP Server with pre-defined option ID 235

- Microsoft Intune DO configuration

Gathering the missing MDM config info to do the actual configuration

First, I installed the Windows 10 Insider VM and had a look at the new DO GPO settings. Bingo, here I found the indicator of the new DOCacheHostSource setting using the DHCP option 235:

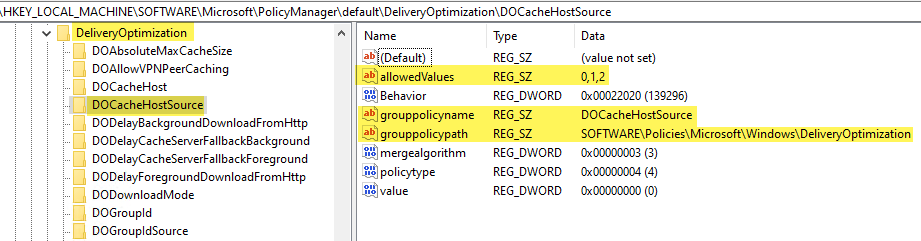

By looking into the registry we can verify the corresponding MDM setting for DOCacheHostSource:

I constructed the custom configuration profile by building the OMA-URI and setting the value to 2 to use the Cache Server Hostname delivered by the DHCP option 235 instead of a configured DOCacheHost value in the Intune configuration profile:

OMA-URI: ./Vendor/MSFT/Policy/Config/DeliveryOptimization/DOCacheHostSource

Value: 2 (Integer)

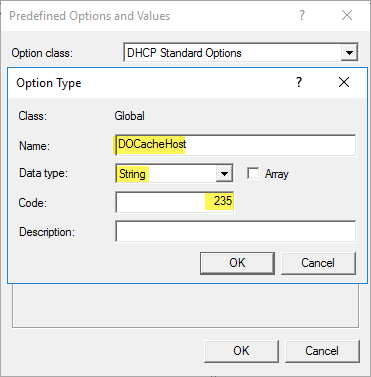
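I built this profile in the portal. Purely as an illustration, the same setting could be expressed as a JSON body of the kind Microsoft Graph uses for custom Windows 10 profiles. The type names (`windows10CustomConfiguration`, `omaSettingInteger`) reflect my understanding of the Graph schema and should be checked against the current documentation; the helper function and the display names are made up for this sketch.

```python
import json

# OMA-URI and value as used in this post.
OMA_URI = "./Vendor/MSFT/Policy/Config/DeliveryOptimization/DOCacheHostSource"
CACHE_HOST_FROM_DHCP_OPTION_235 = 2


def build_custom_profile(display_name, cache_host_source):
    """Build a dict describing a custom profile with one integer OMA-URI setting.

    Assumption: the field names follow the Microsoft Graph
    deviceConfiguration schema; verify before sending this anywhere.
    """
    return {
        "@odata.type": "#microsoft.graph.windows10CustomConfiguration",
        "displayName": display_name,
        "omaSettings": [
            {
                "@odata.type": "#microsoft.graph.omaSettingInteger",
                "displayName": "DOCacheHostSource",
                "omaUri": OMA_URI,
                "value": cache_host_source,
            }
        ],
    }


profile = build_custom_profile(
    "DO - Cache host from DHCP",  # hypothetical profile name
    CACHE_HOST_FROM_DHCP_OPTION_235,
)
print(json.dumps(profile, indent=2))
```

The sketch only builds and prints the payload; it does not talk to any service.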

Next, I needed to set up DHCP option ID 235 just like my regular DHCP option ID 234, which I use to distribute the DOGroupID to my clients. I added an additional predefined option:

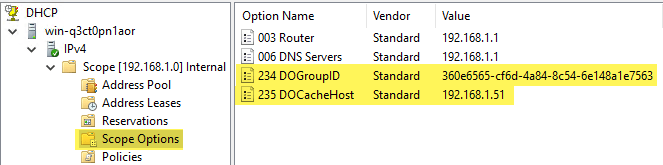

Then I configured a new scope option on the DHCP scope by adding the new predefined option with the IP address of the ConfigMgr DP/MCC, in my case 192.168.1.51.
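For anyone troubleshooting this with a network trace, it can help to know what such an option looks like on the wire. DHCP options use a simple code, length, value layout (RFC 2132). The following Python sketch encodes and decodes option 235 under the assumption that the value travels as a plain ASCII string, which is how I understand a string-type option to be sent; the function names are my own.

```python
def encode_dhcp_option(code, value):
    """Encode one DHCP option as code byte, length byte, then the data."""
    data = value.encode("ascii")  # assumption: plain ASCII string payload
    if not 0 < code < 255:
        raise ValueError("option code must be between 1 and 254")
    if len(data) > 255:
        raise ValueError("option data must fit into a one-byte length field")
    return bytes([code, len(data)]) + data


def decode_dhcp_options(raw):
    """Parse a DHCP options field into a {code: data} dictionary."""
    options = {}
    i = 0
    while i < len(raw):
        code = raw[i]
        if code == 255:   # end option
            break
        if code == 0:     # pad option, a single byte without length
            i += 1
            continue
        length = raw[i + 1]
        options[code] = raw[i + 2 : i + 2 + length]
        i += 2 + length
    return options


encoded = encode_dhcp_option(235, "192.168.1.51")
decoded = decode_dhcp_options(encoded + b"\xff")
print(encoded.hex())
print(decoded[235].decode("ascii"))
```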

In my lab I also use DHCP option ID 234 for DOGroupID distribution. My DO config for the clients is Download Mode 2 (Group mode) with the Group ID source set to DHCP user option:

This simple setup should provide dynamic assignment of the DO group ID and the DO cache server (MCC). This is the scenario I use the most, as it covers roaming users and provides the most flexibility in grouping your devices cleverly. Now it is time to run a test to see if my client gets configured and actually uses the DOCacheHost setting from DHCP.
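Before moving on to the test, here is a small Python sketch of how the per-scope values could be planned. It generates one GUID per logical DO group and assigns option 234 (group ID) and option 235 (cache host) to each DHCP scope. The subnets, group names, and the second cache host address are hypothetical; only 192.168.1.51 comes from my lab.

```python
import ipaddress
import uuid


def build_scope_plan(sites):
    """Map each DHCP scope to its option 234 and option 235 values.

    sites: iterable of (subnet, group_name, cache_host) tuples.
    Scopes sharing a group_name receive the same GUID and therefore
    end up in the same DO group.
    """
    group_ids = {}
    plan = {}
    for subnet, group_name, cache_host in sites:
        network = ipaddress.ip_network(subnet)  # validates the subnet notation
        if group_name not in group_ids:
            group_ids[group_name] = str(uuid.uuid4())
        plan[str(network)] = {234: group_ids[group_name], 235: cache_host}
    return plan


# Hypothetical layout: two buildings share one group, a branch gets its own.
sites = [
    ("192.168.1.0/24", "headquarters", "192.168.1.51"),
    ("192.168.2.0/24", "headquarters", "192.168.1.51"),
    ("10.10.0.0/24", "branch", "10.10.0.5"),
]
plan = build_scope_plan(sites)
for scope, options in plan.items():
    print(scope, options)
```

The GUIDs are random, so the printed values differ on every run; what matters is that scopes in the same group share one value.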

Let’s start with the client MDM setting. Checking the MDM Diagnostics HTML Report I found my setting applied on the device:

I can see on the Intune service side that the policy is applied successfully:

The MDM setting is working. Now let’s see whether we can trace that the DO config is actually used by the Intune-managed device during content downloads. Luckily, Microsoft extended the DO PowerShell cmdlets and added more information to their output. So, I started Windows Update and a Microsoft Store download and had a look at the log files.

We can use the following command to get the last 100 log file messages for Delivery Optimization:

Get-DeliveryOptimizationLog | select Message -Last 100

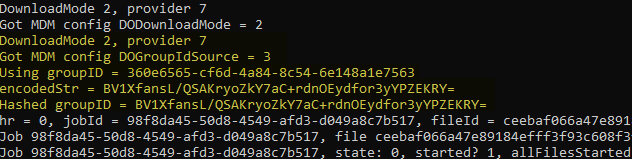

After a quick look I spotted this:

This is a clear indicator that my MDM setting is recognized and used by the DO components. What made me very happy are the following lines, which I spotted in addition:

Here we can see the GroupID is also provided by DHCP and the DOGroupID is actually listed there as clear text. This is a major enhancement for troubleshooting and analysis. In the past this value was encoded and it was always cumbersome to deal with it during troubleshooting. Thanks for that!

Finally I’m also glad about the new PowerShell cmdlet:

Get-DeliveryOptimizationLogAnalysis [-ListConnections]

For a quick summary check on the client this is very helpful. Here is the output from my lab Insider VM:

In addition, there are two more DO cmdlets that are super helpful:

Enable-DeliveryOptimizationVerboseLogs

Get-DeliveryOptimizationStatus -PeerInfo

If you like the graphical representation of DO statistics you can also have a look at the Windows Update Delivery Optimization Activity Monitor in Settings. Microsoft added a separate category for the Microsoft Cache Server:

You can read about some more network throttling enhancements in Windows 10 Version 20H1 here in the section Delivery Optimization:

The setup is done and working, and I could easily see improved content download times with a second VM. The MCC and the dynamic assignment are working as expected. I’ll probably update this blog post as soon as we get the MCC as a Linux container, to include details of the install and the Azure portal reporting. For now, I’m happy to see that we can have dynamic DO settings assignment via DHCP and can also use existing ConfigMgr DPs if they have the MCC component installed. Distributing the DO settings via DHCP options gives enough flexibility to control your grouping so that there are always enough peers in one group while preventing unwanted WAN traffic in your internal network. Roaming users can instantly benefit from an MCC at a larger site and still use normal DO peer-to-peer, as the two work in parallel.

For me this is something I’m looking forward to. Taking care of bandwidth utilization is a very important part of every modern management project, and DO is the key technology here. I hope this helps people understand DO a little better and demonstrates that a very flexible DO concept can be implemented.

For some Microsoft recommendations on Windows servicing and Delivery Optimization settings, I recommend having a look here:

Real-world practices to optimize Windows 10 update deployments

https://techcommunity.microsoft.com/t5/windows-it-pro-blog/real-world-practices-to-optimize-windows-10-update-deployments/ba-p/1227825

Happy caching and bandwidth optimization!

Hi Oliver,

Do you know powershell commands for enabling MCC to all DPs?

Regards,

Joan

Hi Joan,

according to the docs there is an API exposed through the SDK. Here is a starting point: https://docs.microsoft.com/en-us/mem/configmgr/core/plan-design/hierarchy/microsoft-connected-cache#automation

I have not played around with that, but that is where I would start.

best,

Oliver

Thank you Oliver. I will check this and let you know what I can find.

would it be possible to configure Intune DO to no peering between clients and only gets the content from MCC servers ?

Hi Ahmed,

MCC works in parallel to P2P, but if the MCC has enough power and responds to the requests well, I think you will not have much P2P traffic when the MCC is in place.

best,

Oliver

Sorry, I think I found it from DP registry. Thanks

Thanks Oliver, one more question. is configuring DHCP option is mandatory? what if i want to use HTTP blended with peering behind same NAT (1) with Connected Cache ?

Hi Ahmed,

no absolutely not, I chose it to demonstrate because it was new at this point in time. You are fine with Download Mode 1 if it fits your environment.

best,

Oliver

Thanks Oliver, where can I find DOgroupID to configure my DHCP option 234?

Hello Oliver,

Does this solution is supported in 20H2 ? I tested it but during the DHCP Request the client doesn’t request information for option 234/235.

Any Idea ?

Thank you

Hey Guillaume,

Yes, it is supported in 20H2. Did you enable verbose logging upfront (Enable-DeliveryOptimizationVerboseLogs) when you reviewed the log files?

best,

Oliver

Hello,

Yes verbose logging was enabled, it could be due to network issue I’m still troubleshooting, it seems in the initial DHCP Request client don’t ask for 234/235 information, after a DOSVC restart there’s DHCP frame with DHCP Inform type which request 234/235 options but my DHCP server seems to not receive it.

To be continued… 🙂

I’m not understanding something.What’s the point of the 234/GroupID distrubution besides for troubleshooting purposes?

Distributing the GroupID via DHCP option gives you the flexibility to group your devices according to your needs. For example, if you want a DO group to span multiple subnets and buildings, you can simply send out the same group ID to both buildings, resulting in one large DO group.

Thank you .So I have remote offices ,each on their subnets .my goal is to setup mcc so that wherever the device checks in ,they pull their local MCC.So I would setup 235/234 for each scope in DHCP right? Each with different groupID? Where else do setup the group ID besides in dhcp ? Do you have to do anything on Intune side? Also do you have to setup the GPO to use dhcp 235 ? Sorry just confused .Thank you.

Nevermind lol i get it now.I was overthinking the process lol Thanks man.

hi Oliver,

thank you for your efforts.

i have a question please; there is any alternative solution to use MCC without DP.

regards,

Right now there is only MCC with ConfigMgr, but there will be a stand-alone version available pretty soon. 👍

Any etas for the standalone version??

Nothing officially announced. I hope we will get something this summer, but that’s only my wish 🙂

Are the DHCP settings still a requirement? Just curious as this article is essentially 2 years old already.

Thanks,

You can use the DHCP option to provide the Guid or restrict usage to a subnet, various options, but yeah it is still this way.

Hello,

Does someone managed to get it working with an Infoblox DHCP Server ? When we try with a 235 option the client can’t read the information. Infoblox support advised us to encapsulate the 235 option in a 43 option, in this configuration client can read the information but DO seems incapable to read the option and never try to use Connected Cache.

If someone have the solution, please share it 🙂

Thanks

Hopefully someone from the community has experience with InfoBlox DHCP, I don’t so I can’t provide any advice here.

Hi. For HAADJ clients is GPO the way to go? The device configuration workload is still in MECM only WUfB is on InTune. Thank you!

Actually, that depends on your management strategy. A lot of HAADJ clients are managed as regular domain-joined devices, so GPO is the way to go for them, but it is not a golden rule; they could also be managed by MDM. So, your choice.

For setting 234, do we need to select the GroupID as string or text, device log shows that it set GroupID but its not the one i provided in DHCP

As a string. The log typically shows an encoded version of the GUID rather than the value you entered.

Hi Oliver,

We have a situation where we have a one giant wi-fi VLAN/Subnet that is essentially bridged to dozens of sites. The idea is that we want to prevent peering on it but allow peering everywhere else and limit it per subnet. I was hoping to use DHCP Options 234 to set one GUID for our Wi-Fi clients and another for “everyone else”. However, when I look at Intune and select DHCP Option as my choice, I do not get an additional choice to specify a GUID. Is the fix for this to choose “custom” and specify a GUID there?

Thanks,

Sean

You can specify a GUID; you control it on the DHCP server. The client simply asks the DHCP server and it responds, so everything is controlled on the DHCP server side. For example, with 5 networks where 4 should be in the same group and communicate with each other, give those 4 the same GUID in their DHCP scope options and give the 5th a different one.